The Quiet Infrastructure of Bias

How AI Reproduces Gender Inequality at Scale

Artificial intelligence does not wake up in the morning intending to discriminate.

It does not hold beliefs. It does not consciously prefer men over women. It does not deliberately sexualize, minimize, or exclude.

And yet, again and again, AI systems reproduce the same hierarchies that have shaped society for centuries.

The problem is not intention. The problem is infrastructure.

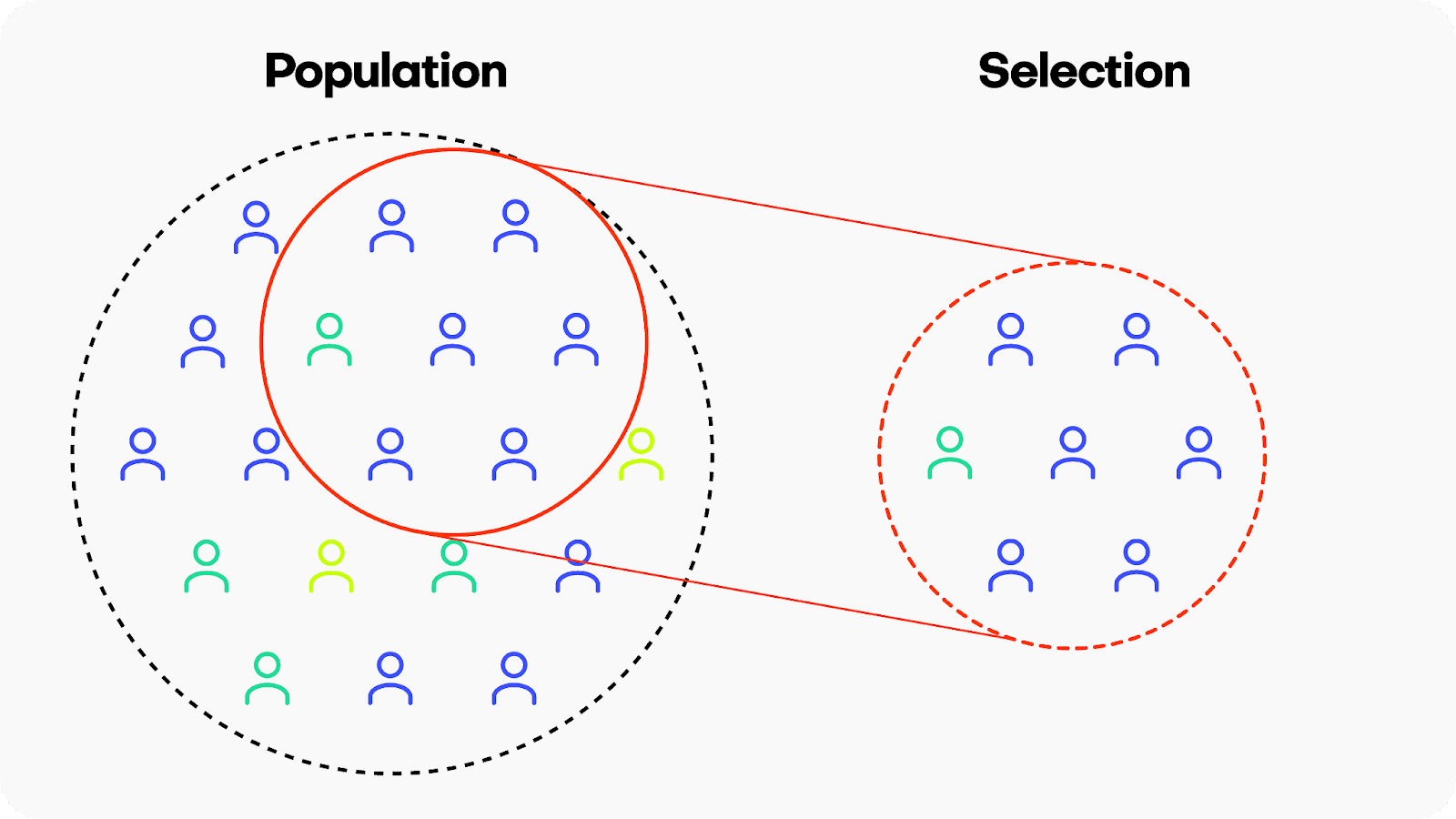

AI systems are trained on enormous datasets: scraped text, images, videos, historical records, hiring data, medical data, social media posts. These datasets are not neutral collections of facts. They are archives of human behavior, and human behavior is structured by power.

If leadership roles have historically been dominated by men, the data reflects that. If media coverage quotes male experts more often than female ones, the data reflects that. If women's work has been undervalued or underpaid, the data reflects that too.

When AI systems learn patterns from that data, they are learning the statistical imprint of inequality.

And because AI operates at scale, it can reproduce those patterns faster and more consistently than any individual human ever could.